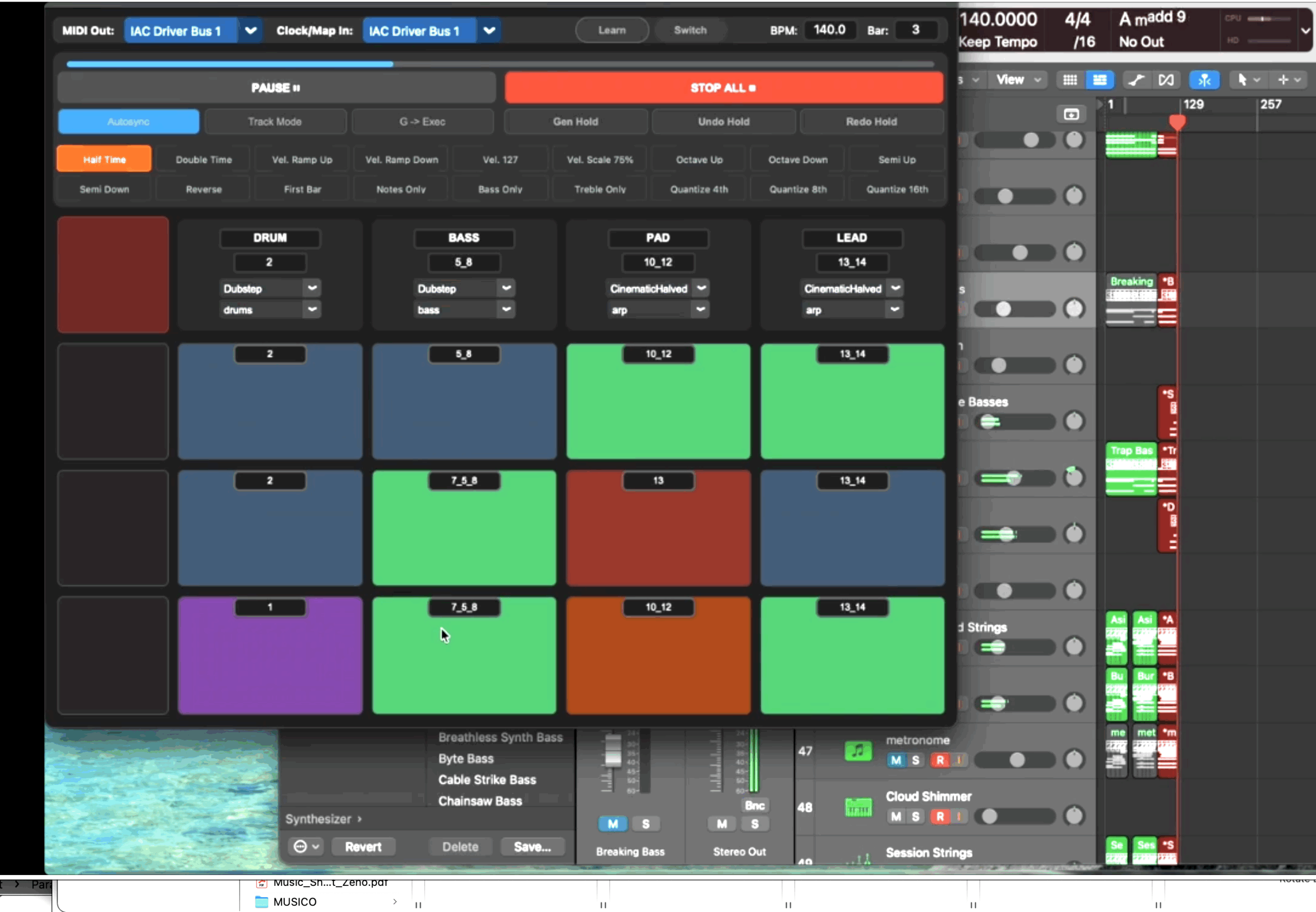


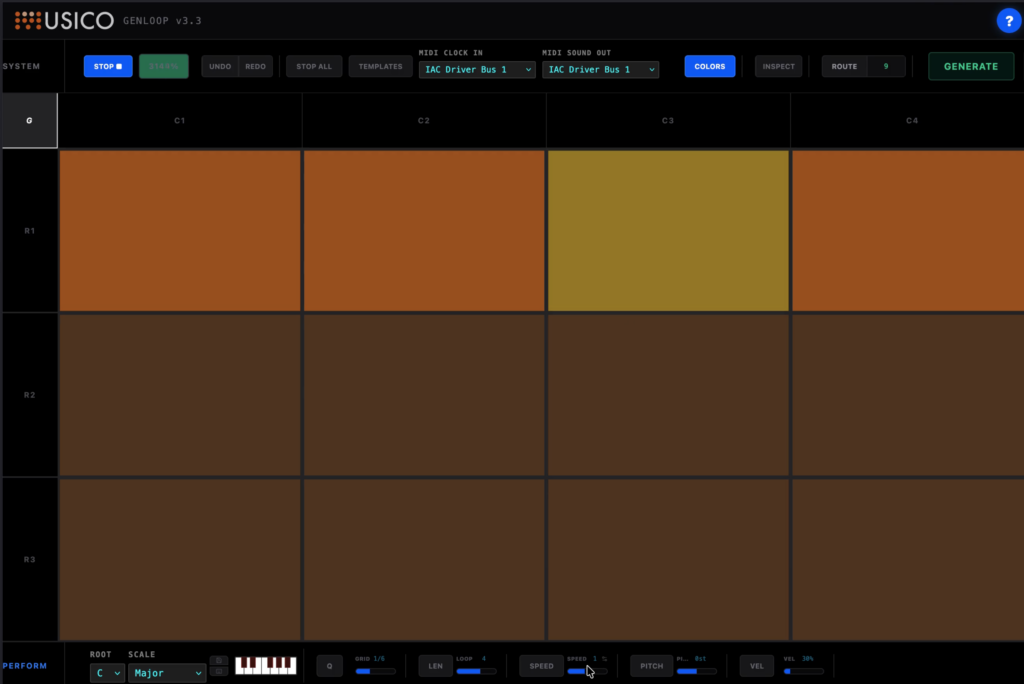

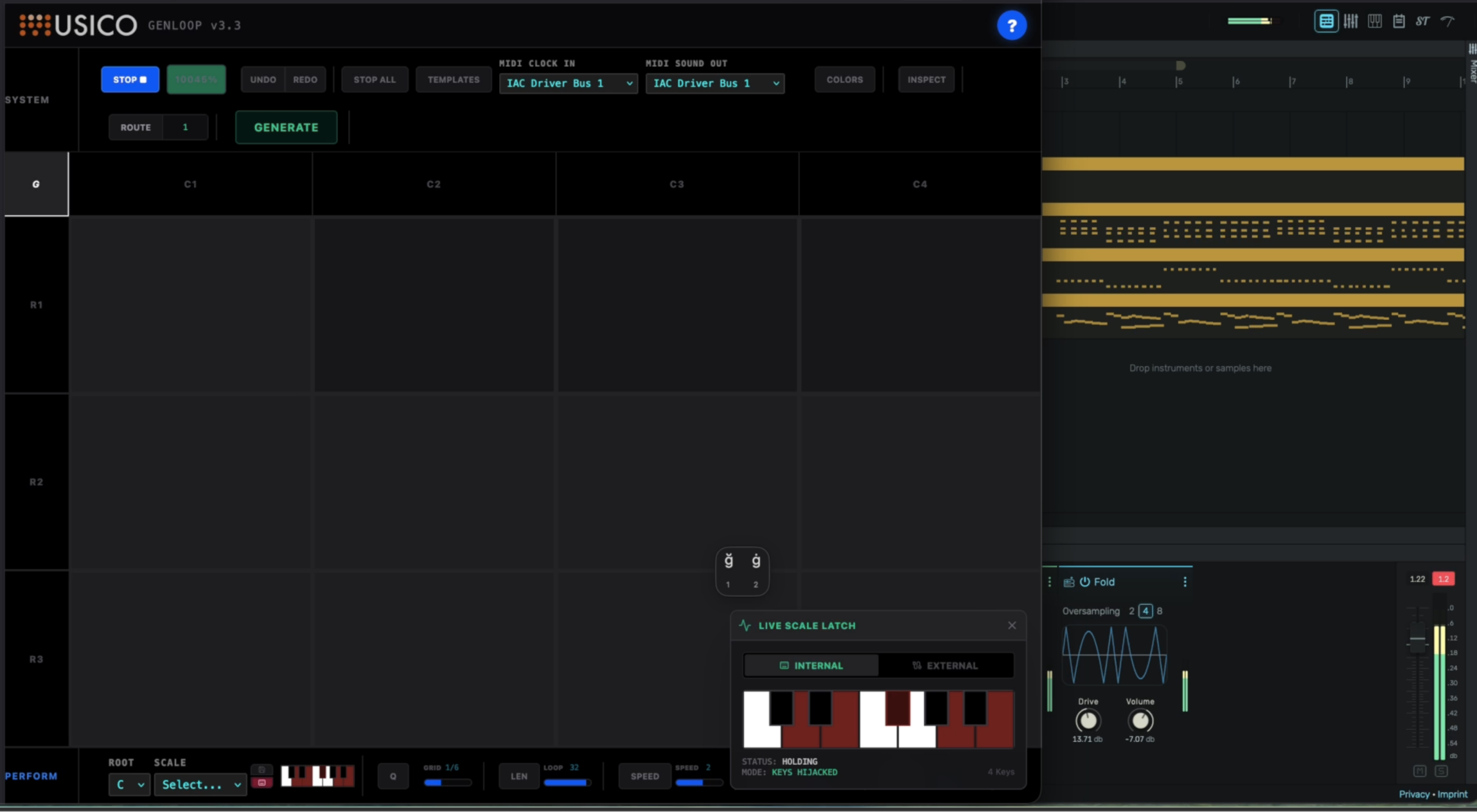

GenLooper: Redefining AI Usability with Granular MIDI Control

In the world of AI-assisted composition, the gap between “random generation” and “human intent” is finally closing.

Our latest usability test for GenLooper explores this frontier, using a specialized classical MIDI dataset to redefine how we interact with symbolic music.

The Scarlatti “Stress Test”

For this study, we used a high-fidelity MIDI dataset of Domenico Scarlatti’s works, curated by MUSI-CO and released into the public domain for scientific research.

Scarlatti’s harpsichord sonatas—famous for their intricate textures and rapid rhythmic shifts—provide the perfect “stress test” for our generative models.

Once we achieved consistent Scarlatti-like generations, we applied two layers of advanced control:

- Post-Generation MIDI Manipulation

Traditional generative audio models often produce a “black box” output over which the user has little say.

In the GenLooper workflow, which is based on AI MIDI music generation, we apply MIDI transformations and functional articulation after the material has been generated.

This allows us to take a raw sequence and re-condition it through a secondary layer, so that the output adheres to the structural logic of the Scarlatti dataset while remaining unique. A prominent example is real-time harmonic control through the keyboard: the pitch of each generated note is “corrected” to the set of notes currently pressed on the keyboard, allowing the musician to shape the harmony of the whole composition in real time.
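The harmonic correction described above can be sketched in a few lines of Python. This is only an illustration, not GenLooper's actual implementation: the function name `snap_to_held_notes` is hypothetical, and we assume that the held keys are matched by pitch class (so holding a C allows a C in any octave) and that ties resolve downward.

```python
from typing import Iterable


def snap_to_held_notes(pitch: int, held_notes: Iterable[int]) -> int:
    """Move `pitch` to the nearest MIDI pitch whose pitch class is held.

    Assumption: held keys are compared by pitch class (note % 12), so the
    generated line keeps its register while following the played harmony.
    """
    allowed = {note % 12 for note in held_notes}
    if not allowed:
        return pitch  # no keys pressed: leave the generated note untouched

    # Search outward from the original pitch; the lower candidate is tried
    # first, so ties resolve downward.
    for distance in range(12):
        for candidate in (pitch - distance, pitch + distance):
            if 0 <= candidate <= 127 and candidate % 12 in allowed:
                return candidate
    return pitch


# Usage: the musician holds a C major triad while the model keeps generating.
held = [60, 64, 67]
generated = [61, 62, 66, 70]
corrected = [snap_to_held_notes(p, held) for p in generated]
print(corrected)  # -> [60, 60, 67, 72]
```

Matching by pitch class rather than by exact key is a design choice made for this sketch; a stricter variant could snap only to the exact pitches being held.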

- Statistical Granular MIDI Control

Rather than just tweaking volume or tempo, users can apply statistical filters in three ways:

Density Mapping: Controlling the number of events per beat to mirror Scarlatti’s rapid-fire “acciaccatura” style.

Velocity Sculpting: Applying granular curves to note-on events for human-like phrasing.

Pattern Shaping: Using granular control to influence the “form” of a generated sequence before it is finalized.
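As a rough illustration of the first two controls, the sketch below caps the number of notes per beat and scales velocities along a phrase. Everything in it is an assumption made for the example: the `Note` type, the function names `limit_density` and `sculpt_velocity`, the random thinning strategy, and the arch-shaped curve are hypothetical stand-ins, not GenLooper's API.

```python
import math
import random
from dataclasses import dataclass, replace
from typing import Callable, List


@dataclass(frozen=True)
class Note:
    onset: float   # position in beats
    pitch: int     # MIDI pitch, 0-127
    velocity: int  # MIDI velocity, 1-127


def limit_density(notes: List[Note], max_per_beat: int,
                  rng: random.Random) -> List[Note]:
    """Keep at most `max_per_beat` notes in each beat, dropping random extras."""
    by_beat = {}
    for note in notes:
        by_beat.setdefault(math.floor(note.onset), []).append(note)
    kept = []
    for beat_notes in by_beat.values():
        if len(beat_notes) > max_per_beat:
            beat_notes = rng.sample(beat_notes, max_per_beat)
        kept.extend(beat_notes)
    return sorted(kept, key=lambda n: n.onset)


def sculpt_velocity(notes: List[Note],
                    curve: Callable[[float], float]) -> List[Note]:
    """Scale each velocity by curve(x), where x in [0, 1] is the note's
    relative position between the first and last onset of the phrase."""
    if not notes:
        return []
    start = min(n.onset for n in notes)
    span = max(n.onset for n in notes) - start
    shaped = []
    for n in notes:
        x = (n.onset - start) / span if span > 0 else 0.0
        velocity = max(1, min(127, round(n.velocity * curve(x))))
        shaped.append(replace(n, velocity=velocity))
    return shaped


# Usage: thin a busy bar to two events per beat, then apply an arch-shaped
# dynamic curve (softer at the edges, full velocity in the middle).
rng = random.Random(0)  # seeded so the thinning is repeatable
busy = [Note(i * 0.25, 60 + i % 5, 90) for i in range(8)]
thinned = limit_density(busy, max_per_beat=2, rng=rng)
phrased = sculpt_velocity(thinned, lambda x: 0.6 + 0.4 * math.sin(math.pi * x))
```

Passing the curve as a function keeps the sketch open-ended: any mapping from phrase position to a gain factor can be plugged in without touching the note data.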

From Generation to Classification

To ensure the AI produces art, not just noise, all patterns pass through a compositional filter built from MIDI feature extractors and classifiers. The filter works in three stages:

- Extract Key Features: Analyzes rhythmic complexity, pitch range, and note density.

- Stylistic Classification: Groups sequences based on their alignment with Scarlatti-inspired forms.

- Intentional Selection: Filters for “compositionally-intended” sequences, ensuring the final output feels like a deliberate artistic choice.
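A minimal sketch of such a filter is shown below. It is not the classifier used in the study: the feature definitions (notes per beat, pitch span in semitones, entropy of inter-onset intervals as a proxy for rhythmic complexity), the function names, and the hand-set bounds are all assumptions chosen for illustration. A trained classifier would replace the simple range check.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple

NoteEvent = Tuple[float, int]  # (onset in beats, MIDI pitch)


def extract_features(notes: List[NoteEvent]) -> Dict[str, float]:
    """Compute three simple descriptors of a generated sequence."""
    if not notes:
        raise ValueError("cannot extract features from an empty sequence")
    onsets = sorted(onset for onset, _ in notes)
    pitches = [pitch for _, pitch in notes]
    span = onsets[-1] - onsets[0]

    # Inter-onset intervals, rounded so equal durations compare as equal.
    iois = [round(b - a, 3) for a, b in zip(onsets, onsets[1:])]
    total = len(iois)
    entropy = sum(
        (count / total) * math.log2(total / count)
        for count in Counter(iois).values()
    ) if total else 0.0

    return {
        "note_density": len(notes) / span if span > 0 else float(len(notes)),
        "pitch_range": float(max(pitches) - min(pitches)),
        "rhythmic_complexity": entropy,  # in bits; 0.0 means a uniform rhythm
    }


def passes_filter(features: Dict[str, float],
                  bounds: Dict[str, Tuple[float, float]]) -> bool:
    """Accept a sequence only if every bounded feature lies in its range."""
    return all(low <= features[name] <= high
               for name, (low, high) in bounds.items())


# Usage with hypothetical bounds; real values would be derived from the dataset.
bounds = {"note_density": (2.0, 8.0), "pitch_range": (5.0, 36.0)}
candidate = [(0.0, 60), (0.5, 62), (1.0, 64), (1.5, 65), (2.0, 67)]
features = extract_features(candidate)
print(features["note_density"], passes_filter(features, bounds))  # -> 2.5 True
```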

Research Note: By integrating these classifiers, we move away from “generative luck” toward a system that evaluates the musicality of its own output.

The Human-in-the-Loop

This test suggests that the future of AI music is not only about massive datasets. It also depends on informed selection and on granular control over what the AI generates. At MUSI-CO, the artist is always in the loop.

![Musico and Soundive united present: the Generative Ai Show [the Music chapter]](https://www.musi-co.com/wp-content/uploads/2023/11/LINEA_event_polisketch.010.jpeg)